Accelerating Responsible Use of De-identified Data in Algorithm and Product Development

Digitization of health care continues to yield ever-growing data sources that, together with advances in analytics and machine learning, offer significant opportunities for breakthroughs in care delivery and biomedical discovery. While there are detailed regulatory and industry guidelines for handling individually identifiable data, de-identified data is typically not subject to privacy laws.

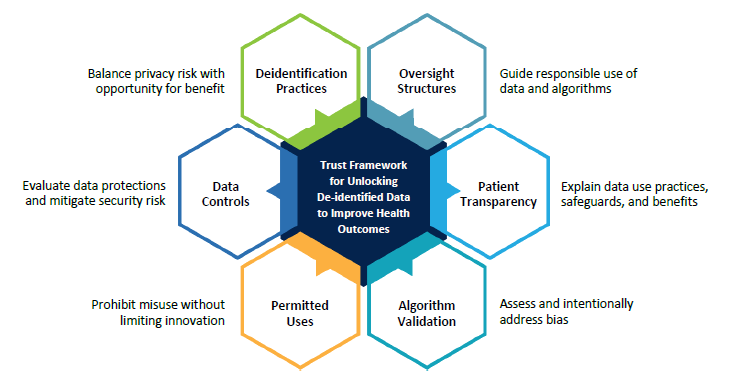

Organizations struggle to balance privacy and security safeguards with innovative use of de-identified data in algorithm and product development. Striking this balance requires industry stakeholders to work in good faith to establish trust between the institutions that produce data and those that use it. To that end, the Trust Framework for Accelerating Responsible Use of De-identified Data in Algorithm and Product Development is a necessary first step toward fair and achievable guidelines.

The Health Evolution Forum developed this framework with the intention that its enduring principles will serve as groundwork on which industry-leading organizations and coalitions can build as they chart the path forward in an ever-evolving technology, regulatory, and business environment.

Thank You

Health Evolution Forum is grateful to the Work Group on Governance and Use of Patient Data in Health IT Products for their commitment, expertise, and contributions to this effort.

Contact

For inquiries about the Trust Framework, please reach out to Ye Hoffman at YeH@healthevolution.com.