At Health Evolution’s 2025 Summit, Dr. Mehmet Oz made his first public appearance as...

Leadership Profile: Headway’s Olivia Davis

Olivia Davis is a senior executive overseeing health plan partnerships, network strategy, and communications...

Advancing Health Equity with Data and Bold Commitment

Long-standing approaches to measuring quality of care have masked dramatic and disturbing variances in...

From Pledge to Action: How Leading Organizations Joined Together to Reduce Health Disparities

Despite increased awareness of health care’s deep-rooted disparities in recent years, many health care...

The Quest for Commercial Risk: How To Unlock Win-Win Arrangements

Costs in the commercial health insurance market are rising at an unsustainable rate for...

Designing Win-Win Commercial Risk-Based Contracts

Value-based payment (VBP) arrangements with two-sided risk are significantly less common in the commercial...

Inspiring Change in Health Care: Cross-Industry Insights from the 2024 Fellowship Year

Health Evolution empowers positive change in health care by convening a curated community of...

What to expect at the upcoming 2024 Health Evolution Connect

Health Evolution is gearing up for 2024 Connect, which will take place September 23-25 in...

‘An opportunity for radical change’: How leaders can realize an AI-powered future for health care

At Health Evolution’s annual Summit, CEOs from leading health systems, health plans, and life...

Principles of Accountability in Mental and Behavioral Health Care Framework

The United States' mental and behavioral health crisis is intensified by a lack of...

Leadership Profile: PT Solutions’ CEO Dale Yake

Dale Yake, CEO of PT Solutions, has been a transformative force in the field...

The New Strategic Landscape: Top Priorities for Health Care CEOs—and Investors

At Health Evolution Summit, CEOs from across the health care industry come together to...

Advancing Equity in Chronic Condition Care: Insights from Top Health Care Leaders

Chronic diseases pose an acute and growing challenge for the United States’ health care...

Lessons in Leadership: How to Empower Resiliency and Drive Impactful Change

Each year, Health Evolution Summit brings together an unparalleled community of influential CEOs from...

Celebrating 20 Years of Impact: Reuniting The Office of the National Coordinator for Health Information Technology (ONC)

The Office of the National Coordinator for Health Information Technology (ONC) recently celebrated two...

Leading Pharma Forward: How Health Care CEOs Can Ignite Innovation and Transformation

At Health Evolution’s 2023 Connect, health care executives convened to address crucial issues across...

Transforming Rural Health Care: A Roadmap for Collaboration and Change

At Health Evolution’s 2023 Connect, industry experts came together to explore the pressing challenges...

Revolutionizing Mental Health Support: A Strategic Imperative for Health Care CEOs

At the forefront of modern health care, leaders strive for a unified mental health...

The Golden Rule in Health Care Leadership: An Interview with Amedisys’ Paul Kusserow

Health Evolution CEO Richard Schwartz recently interviewed Paul Kusserow, a distinguished health care executive...

Leadership Profile: Sutter Health’s Tosan Boyo

Tosan Boyo is the President of Sutter Health’s East Bay Market. He joined Sacramento-based...

Health Evolution Data Trust Framework Serves as Basis for New Joint Commission Certification Program

Health Evolution is excited to announce that “The Trust Framework for Accelerating Responsible Use...

Leading an AI-Powered Future: What Health Care Executives Need to Know

AI’s dramatic impact has become a central topic for leaders across the health care...

Measuring Outcomes in Mental and Behavioral Health: Insights from Health Evolution’s Roundtable on Innovations in Mental and Behavioral Health

As rates of unmet mental and behavioral health needs continue to rise in the...

‘Something Drastic Needs to Change’: Reimagining a New Future for Specialty Care

Primary care long has been at the center of value-based contracts. But as the...

‘Be Relentless’: How to Lead and Advance Health Equity Amid Rising Polarization and Politicization

Across the past two years, the health care industry has faced a series of...

Meet Gabe Stein: The CEO with a Talent for Revolutionizing Health Care

Gabe Stein, CEO of ECLAT, brings an impressive track record of over 20 years...

Inspiring Change in Health Care: Cross-Industry Insights from the 2023 Fellowship Year

Health Evolution empowers positive change in health care by convening a curated community of...

Organizational Profile: MetroHealth

Airica Steed, EdD, RN, FACHE, is the President & CEO of The MetroHealth System,...

Accelerating Responsible Use of De-identified Data in Algorithm and Product Development

Digitization of health...

Connect Spotlight with Jonathan B. Perlin, MD, PhD, MSHA, MACP, FACMI

Jonathan B. Perlin, MD, PhD, MSHA, MACP, FACMI, has made great strides during his...

How health care leaders are navigating the industry’s top challenges—and opportunities

At Health Evolution’s 2023 Summit, CEOs from health systems, health plans, and life sciences...

CEO Profile: Arrive Health’s Kyle Kiser

Kyle Kiser became CEO of Arrive Health in 2021 after serving as its President...

Advancing food as medicine is critical. Cross-industry partnerships can make it sustainable.

The movement to end food insecurity and improve nutrition in the United States is...

CEO Profile: League’s Mike Serbinis

Michael Serbinis is the founder and CEO of League. He is known as an...

CEO Profile: GeBBS Healthcare Solutions’ Milind Godbole

Milind Godbole, fondly known as MG, defines his career by his belief that profitability...

How organizations can build ‘best-in-class’ personalized health care

Health care is entering a new era of personalization at scale. Cross-industry partnerships and...

Advancing Value-Based Specialty Care: Insights from Health Evolution’s Roundtable on Value-Based Care for Specialized Populations

According to the Health Care Payment Learning & Action Network’s (LAN) 2022 Measurement Effort,...

How Medicare Advantage is transforming health care—and 3 ways the program must evolve

There’s been enormous growth in Medicare Advantage (MA). A Kaiser Family Foundation analysis of...

2023 Summit: Health Evolution Fellows Collaborate to Confront Top Industry Challenges

At our recent 2023 Summit in April, Health Evolution Fellows participated in exclusive conversations...

What to expect at the upcoming 2023 Health Evolution Connect

Health Evolution is gearing up for 2023 Connect, which will take place September 27-29...

Unpaid caregivers are key to successful home-based care. Here’s how to support them.

One of the practical challenges to successfully implementing home-based care models is the responsibility...

Summit Spotlight with SCAN’s Sachin Jain, MD

As a leader on the forefront of Medicare Advantage, Sachin Jain, MD, CEO of...

Advancing equity in mental and behavioral health: Three ways to begin, according to top health care leaders

The United States is grappling with a mental and behavioral health care crisis. In...

Summit Spotlight with Mayo Clinic’s Gianrico Farrugia, MD

Gianrico Farrugia, M.D., leads one of the nation's leading hospitals, Mayo Clinic, which cares...

Summit Spotlight with Carladenise Edwards, PhD

Carladenise Edwards, PhD, is an accomplished health care executive with nearly three decades of...

Health Evolution Responds to NIH Request for Information to Address Mental Health Disparities

Health Evolution and our community of influential cross-industry leaders are committed to making the...

Summit Spotlight with EmblemHealth’s Karen Ignagni

During Karen Ignagni's tenure as CEO of the EmblemHealth family of companies, she has...

What to expect at the upcoming 2023 Health Evolution Summit

Health Evolution is gearing up for Summit 2023, which will take place April 19-21...

Connect 2022 Agenda

Helping Health Care Executives Achieve Their Transformation Goals. Health Evolution is announcing Connect, an...

Bias in, bias out: Responsible use of data to advance innovation and equity

Health Evolution Fellows outline why addressing bias in data is critical and seek input...

Moving from pilot to scale in digital health and home-based models of care

Health Evolution Fellows discuss the critical elements of scaling emerging technologies across the care...

Innovator CEO profile: Clarify Health’s Jean Drouin

Powered by Clarify Health. Drouin discusses the company’s origin story, the accomplishments he’s most...

Applying Medicare Advantage lessons learned to commercial populations

Health Evolution Fellows discussed ways that CEOs can lead the transition to focus on...

Lessons learned in advancing equity: Maternal health

Executives supporting the Health Equity Pledge virtually convened to share experiences and learnings in...

How to improve consumer experience with digital strategies

Powered by CitiusTech. At the 2022 Health Evolution Summit, executives discussed tactics leaders can...

The case for addressing climate change and health equity together

At the 2022 Health Evolution Summit, CEOs shared lessons learned from sustainability work and...

How mental health care and social determinants intersect

Health Evolution Fellows share their perspectives on bringing care to people experiencing homelessness, using...

Innovation and the competitive landscape for primary care: ‘We have to learn to disrupt ourselves’

CEOs discuss how primary care is changing, potential winners and losers, cross-industry advice they...

The next era of value-based care: Accelerating progress in the years ahead

As health care industry leaders look to the next phase of value-based care, success...

The most important lesson health care CEOs should learn from retail: An interview with Walgreens Boots Alliance CEO Roz Brewer

Brewer discusses her commitment to inclusion, how she thinks about culture, what the future...

Why it’s time to change the narrative around mental health and social media

Social media platforms are often cited for negatively impacting the mental health of young...

Five tactics to unlock the potential for data and analytics to improve care experiences and outcomes

Powered by Optum. Executives from Boulder Community Health, Optum and UnitedHealth Group shared lessons...

Innovator CEO profile: Omada Health’s Sean Duffy

Powered by Omada Health. Duffy discusses Omada’s recent $196 million funding round, the accomplishment...

Chris Chen on how surviving COVID-19 changed him as a CEO

https://vimeo.com/641231777 Chen discusses his life-altering experience being infected with the coronavirus, what he learned...

SCAN Group and Health Plan CEO Sachin Jain on the art of the possible

https://vimeo.com/638756912 In this spotlight on Forum Fellows, Jain discusses the organization’s work to transform...

Here’s a glimpse inside the invitation-only Forum convening at the 2022 Health Evolution Summit

CEOs and thought leaders shared perspectives on primary care, the future of risk, digital...

The Great Resignation: An opportunity to improve the health care workforce?

At the 2022 Health Evolution Summit, health system CEOs discussed ways to leverage the...

2022 Connect Overview

Helping Health Care Executives Achieve Their Transformation Goals. Health Evolution is announcing Connect, an...

Most health care and research doesn’t operate on big data — that needs to change

Experts at the 2022 Health Evolution Summit discussed the power of big data, challenges...

2022 Connect – On-site Info

Welcome to the inaugural Health Evolution Connect! We encourage you to take advantage of...

Life sciences CEOs share 5 priorities to maintain beyond the pandemic

Executives at the 2022 Health Evolution Summit outlined how COVID-19 has advanced their consciousness...

Nuance CEO Mark Benjamin: AI is about ‘people caring for people’

Benjamin discusses the grand vision for leveraging artificial intelligence to connect the dots across...

Working toward health equity in life sciences: An interview with Takeda’s Julie Kim

Kim discusses being a woman of color in the C-suite, why choosing the more...

‘Climate anxiety’ is on the rise. Here’s how leaders can address the mental health crisis

Climate change is associated with an increase in behavioral health problems that will only...

The future of virtual-first care models: 3 considerations

Virtual-only and virtual-first care services are succeeding in the right situations, but even companies...

Update: Health Evolution Summit 2022 agenda

Here are the overarching themes, latest programmatic additions to the Summit’s CEOs, policymakers and...

Why Strong Black Women need mental health care, too

In this guest opinion piece, Nashville General CEO Joseph Webb considers data points that...

How Kaiser Permanente wrote the playbook for KP at Home — while building the program

The health system, much like Mayo Clinic, Intermountain and others, is moving more care...

The next critical step to advancing health equity? Improving data collection

Health Evolution Fellows and executives on the front lines of advancing health equity share...

InstaMed and J.P. Morgan’s Bill Marvin: Leveraging payment advancements can improve patient experience

Powered by InstaMed. Marvin discusses what the company has learned from tracking payment trends...

Lyft Healthcare executive on value-based care, ROI, and addressing social determinants of health

Buck Poropatich discusses how the enterprise is helping to close gaps in care, reducing...

Connect PRE-REGISTRATION

Thank you for pre-registering for 2022 Connect. You can send...

Providence Health Plan CEO on top three priorities for transforming health care

Don Antonucci spoke with Health Evolution about the most critical tactics the health plan...

AI regulations are coming: Health care has a window to reverse course and address bias now

Taking steps to identify and reduce bias in algorithms will enable health care organizations...

Edwards Lifesciences CEO Mike Mussallem on balancing innovation, ethics and resilience

Mussallem discusses forging unique partnerships amid crises, medical advancements he’s seen throughout his career,...

Executives share three tactics for helping underserved communities emerge from COVID-19

Leaders serving vulnerable populations have learned areas of focus that can help other organizations...

Innovator CEO Profile: AliveCor’s Priya Abani

Powered by AliveCor. Abani discusses the company’s origin story, its expansion toward a global...

Frontline workers are stressed to the brink. Here’s what they need from leadership

In today’s pandemic conditions, employees often feel overworked and underpaid and many indicate that...

Guest column: Honoring Black History Month and Martin Luther King, Jr. with advocacy addressing inequalities in health care

Nashville General CEO Joseph Webb calls on physicians, politicians, community and public health leaders...

Early lessons learned: Intermountain transitioning its health at home model from a pandemic response into a long-term vision

After assembling the program in a matter of weeks, the health system has both...

What will it take to fix the U.S. mental health crisis? ‘A social movement’

Tom Insel, former Director of the National Institute of Mental Health and noted neuroscientist...

Health Execs on the Move: Cohen lands at Aledade; OU Health names new CEO

Former Secretary of Health and Human Services for North Carolina, Mandy Cohen, MD, lands...

How a $20 billion company stays nimble during the pandemic

BD’s CTO Beth McCombs discusses the company’s innovation strategy during the pandemic and its...

Allina Health has turned to digital health to solve a mental health crisis

Across the country, providers are facing a mental health provider shortage at a time...

Walgreens CEO Roz Brewer on improving the patient care journey

Brewer discusses her first year as chief executive of Walgreens Boots Alliance, the potential...

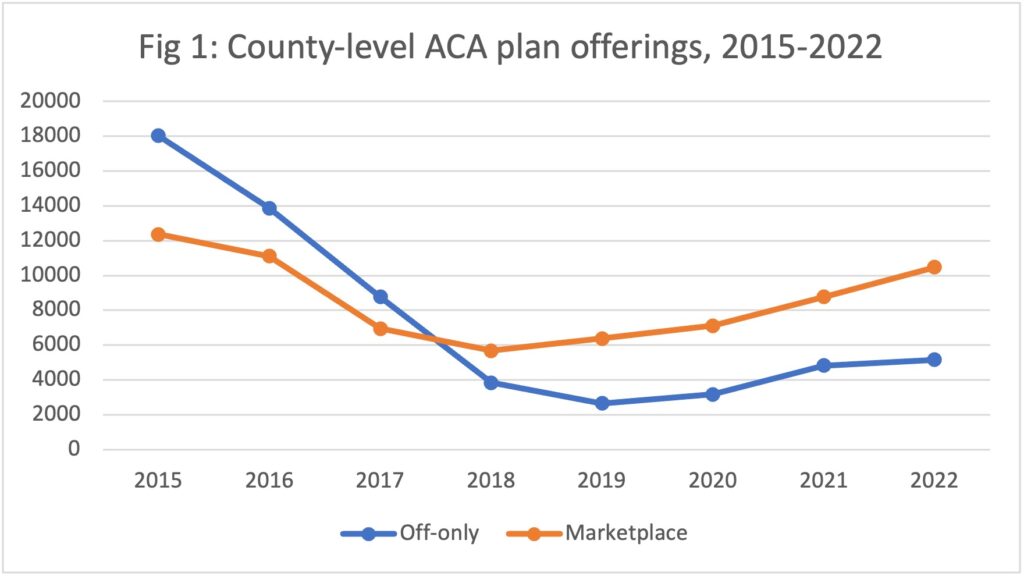

Data dive: ACA enrollment hits records as more insurers offer plans

The Affordable Care Act saw a record number of enrollments for 2022 and insurers...

‘Talked over and interrupted’: Gender gap in health care leadership is problematic

Health care’s gender gap is as wide as ever, as only 15% of health...

Health Evolution expands Forum with new initiatives, partners and Fellows

The Forum, a cross-industry collaboration of more than 200 CEOs and other health care...

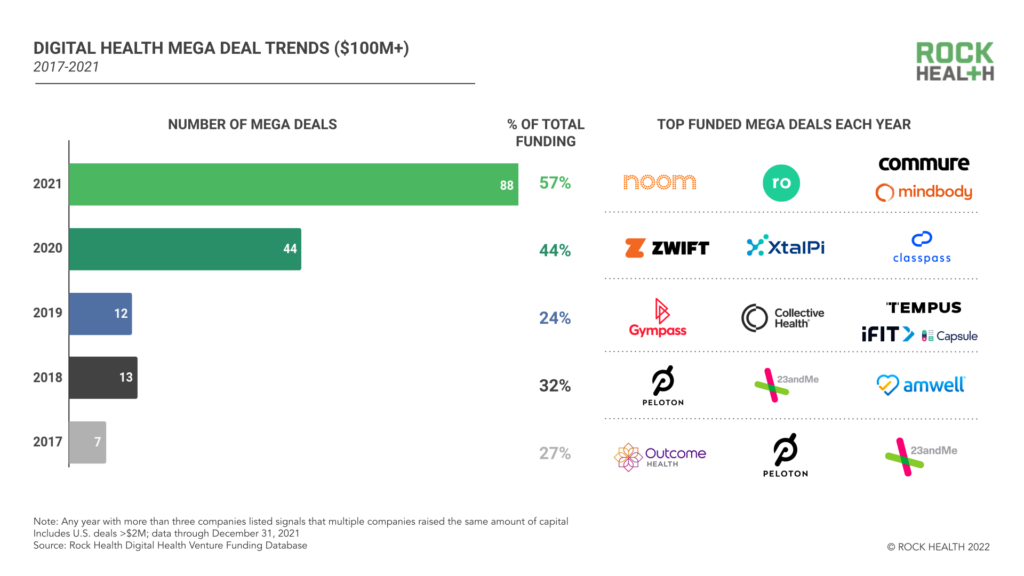

2021 was a record-breaking year for digital health funding. Will 2022 top it?

Why two physicians-turned-investors are expecting the digital health market to level off in 2022...

Accelerating the transition to value-based care

Powered by Navvis. With decades of experience in value-based care, Florida Medical Clinic CEO...